
Most AI search advice focuses on tactical fixes. The real failures are strategic — and they start with optimizing the wrong layer entirely.

Roughly 80% of URLs cited by ChatGPT, Gemini, and Copilot don't rank anywhere in Google's top 100. You can have a perfectly optimized site, strong domain authority, great rankings, and still be invisible in the AI answers your buyers are reading.

The mistakes below aren't about missing a schema tag or forgetting an FAQ block. They're about fundamental misalignments in how teams think about AI visibility: what to prioritize, what to measure, and where to show up.


The most expensive mistake teams make isn't a bad tactic. It's a bad mental model.

When AI search started dominating the conversation, a lot of teams responded in one of two ways: either they declared SEO dead and started building entirely new programs from scratch, or they dismissed the whole thing as hype and changed nothing.

Both responses get it wrong.

A lot of what works for traditional SEO still works for AI search. Clear, well-structured content. Topical authority. Technical health. Strong internal linking. None of that stopped mattering. Google search volume is still growing, and AI Overviews only appear in roughly 16–25% of searches depending on month and dataset, which means the majority still resolve the way they always have.

What changed is specific and additive: there's a new layer (the citation layer) where LLMs decide which brands to mention in their answers. That layer runs on different signals than traditional rankings, and it requires a different motion to win. But it doesn't replace the foundation you've already built.

If you're tearing down your SEO program to "pivot to AI search," you're overreacting.

If you're ignoring the citation layer because "SEO still works," you're underreacting.

The right move is to keep the foundation and add the one new motion that actually changed.

So what does that new motion actually require? That's where the next mistake comes in, because most teams that do add something new end up adding it in the wrong place.

This is the big one. The mistake that makes everything else harder.

Most AI search programs focus almost entirely on on-site optimization: restructuring content for extractability, adding schema markup, building FAQ sections, improving page speed. All of that is useful. None of it is the most important lever.

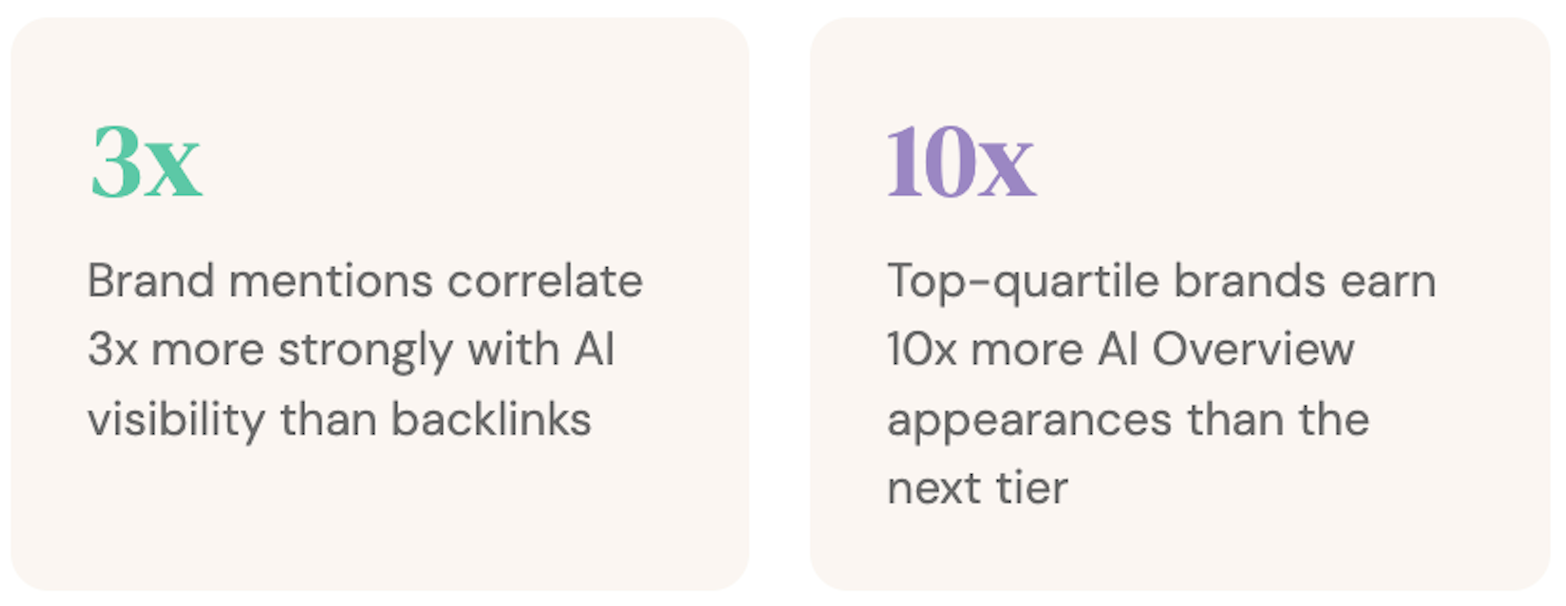

Ahrefs studied 75,000 brands and found that the factor most correlated with AI Overview appearances isn't domain authority, backlinks, or content volume; it's brand web mentions. In other words, how often your brand is talked about across the web, on sites you don't own. That correlation sits at 0.664, roughly 3x stronger than backlinks at 0.218.

Brands in the top 25% for web mentions also earn more than 10x as many AI Overview appearances as brands in the next quartile down: an average of 169 AI Overview citations versus just 14. And 26% of brands have zero AI Overview mentions at all, regardless of how well their sites rank.

Below that threshold, you're largely invisible… no matter how good your site is.

The takeaway is that if you're spending 100% of your AI search budget on your own site, you're optimizing the wrong layer. The citation layer lives off your domain. That's where the biggest gains are.

86% of the top-mentioned sources are not shared across ChatGPT, Perplexity, and AI Overviews. Out of the top 50 most-cited domains on each platform, only 7 appear on all three lists. Each platform has different source preferences — and checking your visibility on one doesn't tell you much about the others.

ChatGPT leans on Wikipedia (16.3% of mentions), publisher content, and news outlets. It has licensing deals with many top-cited sources and tends to cite pages that don't rank well in Google — only about 10% of its short-tail citations overlap with Google's top 10.

Perplexity pulls heavily from YouTube (16.1% of mentions), Reddit, and a broader international source base. It's the most SEO-aligned AI assistant, with 28.6% of its cited URLs ranking in Google's top 10, but that still means over 70% come from elsewhere.

Google AI Overviews distribute citations more evenly across user forums and niche sites, with Reddit (7.4%) and Quora (3.6%) ranking unusually high. AI Overviews also show the strongest correlation with traditional rankings, but that correlation weakens once you move to AI Mode.

Checking your visibility on one platform and calling it done is like checking your Google ranking and assuming you rank the same on Bing.

The fix isn't to be everywhere at once. It's to know which platforms and source types matter for your specific category and build presence across them deliberately.
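One way to see how different the platforms are for your own category is to compare the domains each one cites for the same prompts. The sketch below is a minimal illustration, not a finished tool: the platform keys and domain names are hypothetical placeholders, and you would replace them with the domains you actually observe. It reports which domains show up on every platform and how much each pair of platforms overlaps.

```python
from itertools import combinations

# Hypothetical example data: domains cited per platform for one category.
# Replace these placeholder sets with the domains you actually observe.
cited = {
    "chatgpt": {"wikipedia.org", "example-news.com", "reddit.com"},
    "perplexity": {"youtube.com", "reddit.com", "example-blog.com"},
    "ai_overviews": {"reddit.com", "quora.com", "example-blog.com"},
}


def shared_everywhere(cited_by_platform):
    """Return the domains that every platform cites."""
    if not cited_by_platform:
        return set()
    return set.intersection(*cited_by_platform.values())


def pairwise_overlap(cited_by_platform):
    """Return the Jaccard overlap (shared / combined domains) per platform pair."""
    result = {}
    for a, b in combinations(sorted(cited_by_platform), 2):
        domains_a, domains_b = cited_by_platform[a], cited_by_platform[b]
        union = domains_a | domains_b
        result[(a, b)] = len(domains_a & domains_b) / len(union) if union else 0.0
    return result


if __name__ == "__main__":
    print("Cited on every platform:", shared_everywhere(cited))
    for pair, score in pairwise_overlap(cited).items():
        print(pair, round(score, 2))
```

A low overlap score between two platforms is a signal that presence in one platform's sources will not carry over to the other, so each needs its own source list.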

But even teams that get their platform coverage right often stumble on the next question: how do you actually know if it's working?

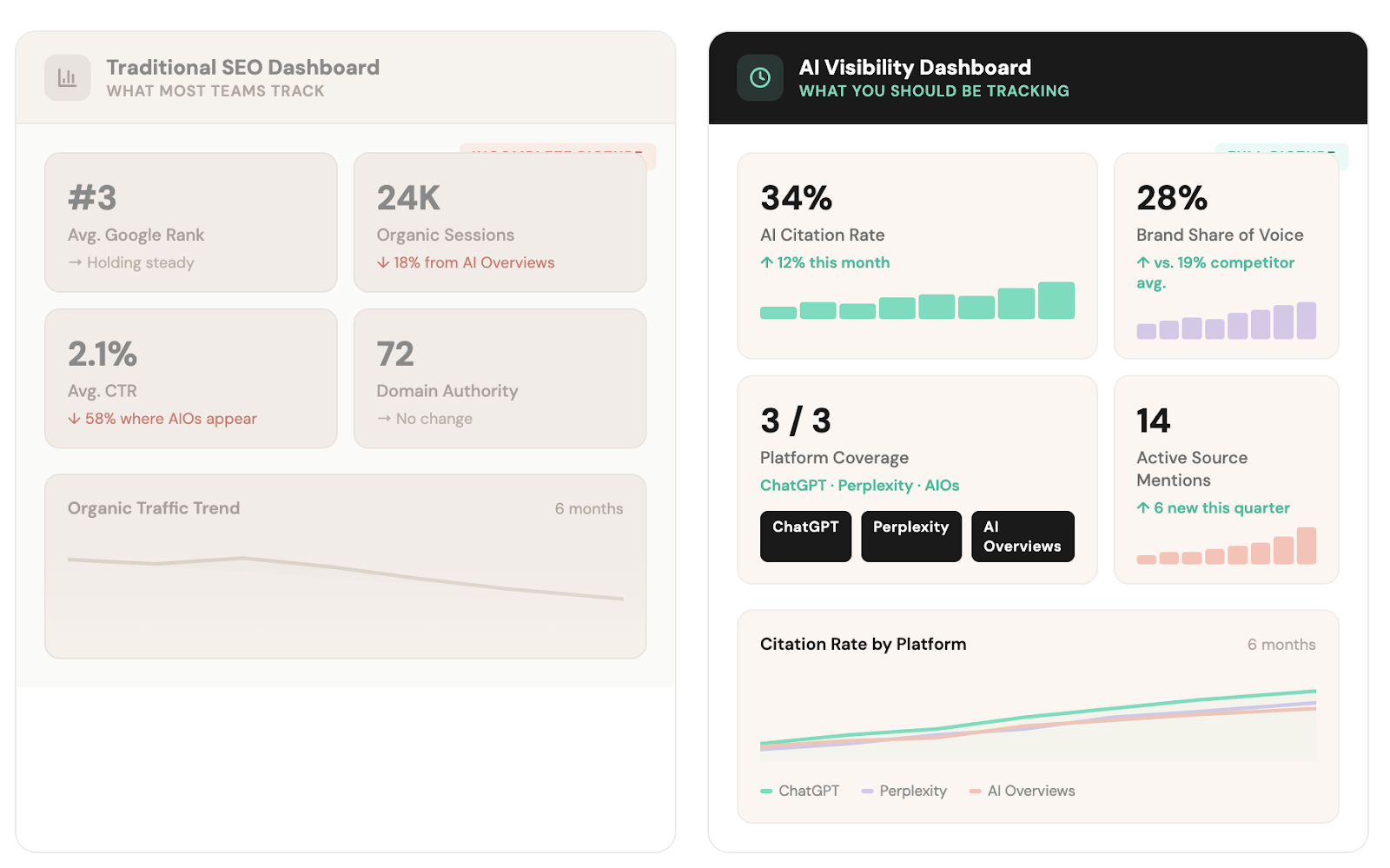

AI Overviews now reduce CTR by 58% for top-ranking pages, up from 34.5% when Ahrefs first measured in April 2025. You can rank #1 on Google and be completely absent from the AI answer that sits above your result.

If you're only measuring clicks and rankings, you're measuring a shrinking portion of the picture.

What matters in AI search is different: citation rate across platforms, brand mention share of voice, which sources are surfacing your brand, sentiment of those mentions, and how you compare to competitors in AI-generated recommendations.

These metrics don't show up in Google Search Console. Most teams don't track them at all. In fact, McKinsey's CMO survey shows us that just 16% of brands systematically track AI search performance.

This isn't a failure of intelligence; it's a gap the industry hasn't closed yet. According to a 2025 B2B CMO Pulse survey from Modus and Semrush, 85% of marketing leaders view GEO as a critical priority, but nearly half admit they lack clear metrics to measure success.

Start with what you can do now: run your top 10–20 buyer-intent prompts through ChatGPT, Perplexity, and Google AI monthly. Record who shows up, what sources are cited, and whether you're among them.

You can do this manually with our spreadsheet template or use a tracking tool (here are some we recommend). That simple spreadsheet will tell you more about your AI visibility than any traditional dashboard.
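If you keep that log as structured rows rather than free-form notes, two of the metrics above fall out of it directly. The sketch below assumes a hypothetical row format (month, platform, prompt, brands mentioned, sources cited) and made-up brand names; the definitions of citation rate and share of voice used here are one reasonable reading, not an industry standard.

```python
from __future__ import annotations

from collections import Counter
from dataclasses import dataclass


@dataclass
class Observation:
    """One recorded AI answer. The field names are assumptions for this sketch."""
    month: str
    platform: str
    prompt: str
    brands_mentioned: list[str]
    sources_cited: list[str]


def citation_rate(observations, brand):
    """Fraction of recorded answers that mention the given brand."""
    if not observations:
        return 0.0
    hits = sum(1 for o in observations if brand in o.brands_mentioned)
    return hits / len(observations)


def share_of_voice(observations):
    """Each brand's share of all brand mentions, counted once per answer."""
    counts = Counter(b for o in observations for b in set(o.brands_mentioned))
    total = sum(counts.values())
    return {b: n / total for b, n in counts.items()} if total else {}


# Hypothetical log entries; "Acme" and "Globex" are placeholder brands.
log = [
    Observation("2025-01", "chatgpt", "best crm for startups",
                ["Acme", "Globex"], ["example-review.com"]),
    Observation("2025-01", "perplexity", "best crm for startups",
                ["Globex"], ["example-forum.com"]),
    Observation("2025-01", "ai_overviews", "best crm for startups",
                ["Acme"], ["example-review.com"]),
    Observation("2025-01", "chatgpt", "crm with email automation",
                ["Globex"], []),
]

if __name__ == "__main__":
    print("Acme citation rate:", citation_rate(log, "Acme"))
    print("Share of voice:", share_of_voice(log))
```

Filtering the log by platform or month before calling these functions gives you per-platform and month-over-month views from the same rows.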

AI answers are far more volatile than traditional search results. If you treat AI visibility as a one-time project, you'll lose whatever ground you gain.

Ahrefs studied AI Overview volatility and found that AI Overviews have a 70% chance of changing from one observation to the next for the same query. When the answer does change, 45.5% of the cited sources are replaced with entirely new URLs. That's roughly one citation swap every time a query is re-run.

Compare that to traditional search, where a #3 ranking might hold for months. In AI search, you can be in the answer on Monday and gone by Thursday.

The underlying meaning of the answer stays consistent. Ahrefs measured a 0.95 cosine similarity score between consecutive AI Overviews, meaning the conclusion barely changes even when the sources rotate constantly.

This is actually useful to know: it means models keep recommending the same types of brands, but they keep pulling from a rotating pool of sources to do it. If you're only in one or two of those sources, your visibility becomes a coin flip.

Freshness matters, too. Content with statistics, citations, and quotations achieves 30–40% higher visibility in AI responses, and pages updated within two months earn 28% more citations than older content. That applies to the third-party sources you're mentioned in, not just your own site.

If the listicle that mentions your brand hasn't been refreshed in six months, it may be losing its citation power and taking your visibility with it.

AI search measurement is still messy. There's no equivalent of Google Search Console that gives you a clean, complete picture of your AI visibility. Some teams look at this and decide to wait.

That's a mistake — because your competitors aren't waiting. 54% of US marketers plan to implement GEO within 3–6 months, according to the Scribewise GEO Readiness Report. The GEO services market was already valued at $886 million in 2024 and is projected to reach $7.3 billion by 2031. This isn't a niche experiment anymore.

And the brands that start now build compounding advantages. Citation footprints grow over time. The mentions you place today become part of the live index that LLMs pull from tomorrow. Measurable results can show up within months from just a handful of well-placed mentions.

In fact, when we ran a small-scale test with an SEO agency called Graphite, we started by securing 6 strategic placements, and they saw a 33% increase in AI visibility (up from 0%, mind you).

The only thing coming between you and seeing an impact is getting started.

If you've read this far, the strategy is probably clicking: get your brand mentioned in the off-site sources that LLMs trust, across multiple platforms and source types, and keep refreshing that presence over time.

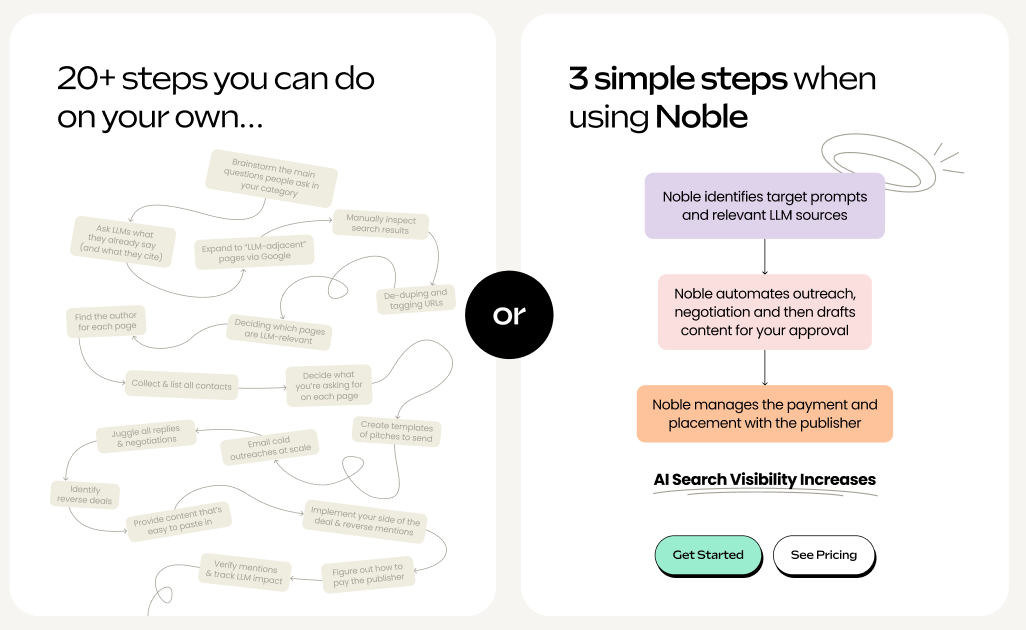

The strategy is clear… But it’s the execution where teams stall.

Getting into the right sources requires identifying which articles, listicles, and roundups LLMs are citing for your category. Then reaching out to each publisher. Negotiating terms. Getting copy approved. Managing payment. Tracking whether the mention actually went live. Monitoring whether it's still driving citations months later.

That's 20+ manual steps and up to 10 hours of work per mention.

Most marketing teams are already stretched. Adding a labor-intensive outreach motion on top of existing SEO, content, and paid programs isn't realistic... especially when the process needs to be ongoing, not a one-time effort.

This is what Noble was built for. Noble automates the outreach, negotiation, and payment required to get your brand placed in the sources that power AI answers across Google, ChatGPT, and Perplexity.

→ Here’s how it works

About Noble: Noble is an AI search platform that automates the outreach, negotiation, and payment required to get your brand mentioned in the sources LLMs cite. The result: you show up in AI-generated answers. Book a demo here.
