
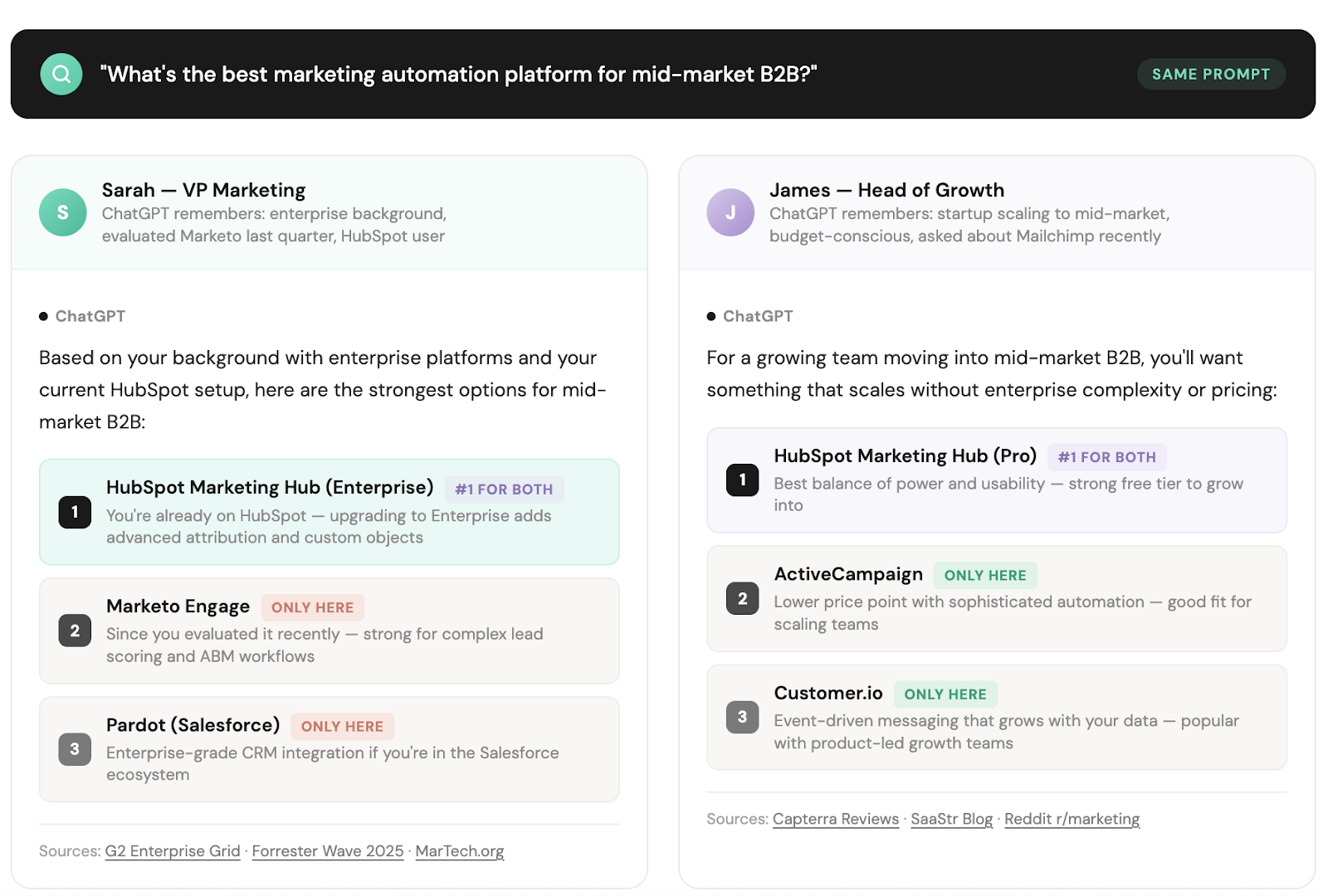

ChatGPT, Google, and Perplexity are now tailoring answers to each user. There's no single "answer" to optimize for anymore — which makes where your brand gets mentioned more important than ever.

TL;DR: AI search results are no longer universal. ChatGPT, Google AI Mode, and Perplexity now personalize answers based on user memory, context, and history, meaning two people asking the same question can get different brand recommendations. You can't optimize for one answer. You need to be present across more of the sources these models draw from.

You ask ChatGPT for a project management tool recommendation and get one set of answers. Your coworker asks the same thing and gets a slightly different list, in a different order, with different sources cited. It's not a glitch. It's personalization — and it's already live across every major AI search surface.

In April 2025, OpenAI launched Memory with Search. If you have memory enabled, ChatGPT rewrites your prompts before searching, using what it knows about you from past conversations. "Restaurants near me" becomes "vegan restaurants in San Francisco" if ChatGPT remembers those details.

A user who's been asking about enterprise compliance tools for weeks will get different vendor recommendations than someone exploring startup-stage CRMs for the first time. Same question. Different answer.

At Google I/O 2025, Google announced Personal Context for AI Mode. In early 2026, it shipped as "Personal Intelligence." AI Pro and Ultra subscribers can now connect Gmail and Google Photos to AI Mode, with more Google services expected to follow.

Search responses are now shaped by purchase history, travel confirmations, brand preferences, and personal context. Google's demo showed a user asking about buying a coat, and the answer factored in brands they'd bought before, a flight confirmation to Chicago in their inbox, and March weather data. All automatic.

Apply that to a B2B buyer asking about "best marketing automation platforms." The answer is shaped by their recent vendor emails and past research.

Perplexity personalizes its Discover tab based on your full search and chat history, building an ongoing profile of your interests. If you've been researching SEO tools for weeks, your Perplexity feed looks very different from someone exploring design software.

87% of B2B software buyers say AI chats are already changing how they research vendors. Half now start their buying journey in an AI chat instead of Google, a 71% jump from just four months earlier. Those buyers aren't all seeing the same results anymore.

Your brand is either showing up in those personalized results… or it's not.

When answers are personalized per user, the sources those answers draw from also shift per user. You can't predict which source any given buyer's personalized answer will cite, and the model is making that call based on context you don't control.

This fragmentation was already a challenge before personalization. An analysis of 680 million citations found that only 11% of domains are cited by both ChatGPT and Perplexity.

The divergence extends to individual URLs: only 12% of links cited by AI assistants also appear in Google's top 10 for the same query. Traditional search visibility and AI search visibility are increasingly separate problems. Personalization adds another layer of variation on top.

The mental model shift: instead of optimizing one page to win one answer, you're building a portfolio of brand mentions across many trusted sources, so you're present regardless of which sources any given answer draws from.

On-site optimization is still the floor.

But getting into the actual answer now requires being present across more of the citation sources the model pulls from. We wrote about this shift in detail here.

More answer variations mean more citation sources getting surfaced across your total addressable audience. The brands that win aren't the ones optimizing one page perfectly; they're the ones with the widest footprint in the sources LLMs trust.

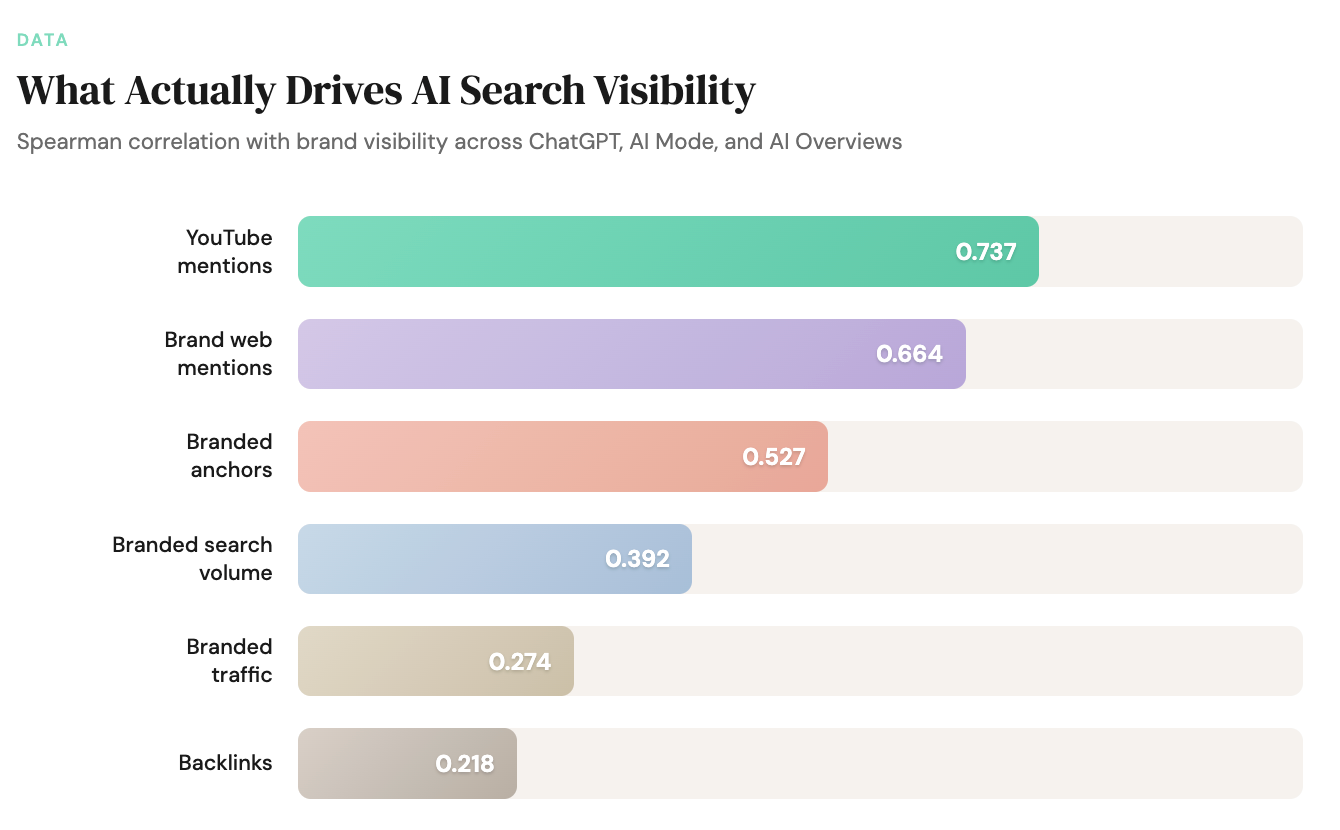

Ahrefs studied 75,000 brands and found that the factor most correlated with AI Overview appearances isn't domain authority or backlinks. It's brand web mentions (correlation: 0.664, roughly 3x stronger than backlinks at 0.218): other sites talking about you.

There's a cliff effect: brands in the top 25% for web mentions earn 10x more AI Overview appearances than brands in the next quartile. Below that threshold, you're largely invisible no matter how strong your site is.

Personalization amplifies this. When the same prompt generates different answers for different users (pulling from different source pools based on their context), the only reliable strategy is breadth.

Breadth looks like your brand being mentioned in the third-party sources that power AI answers: category listicles, best-of roundups, comparison guides, analyst write-ups, trade editorial, YouTube videos, and Reddit threads.

Ahrefs' expanded study found YouTube mentions (meaning any time a brand name appears in a video title, transcript, or description) show the highest correlation with AI visibility (~0.737) across ChatGPT, AI Mode, and AI Overviews alike. Reddit and Quora also surface heavily. These aren't side channels anymore.

Each platform draws from different wells.

The good news: dominant brands tend to surface across all three platforms (brand overlap correlation: 0.75–0.82).

Build enough mention breadth and you win everywhere, not just on one platform.

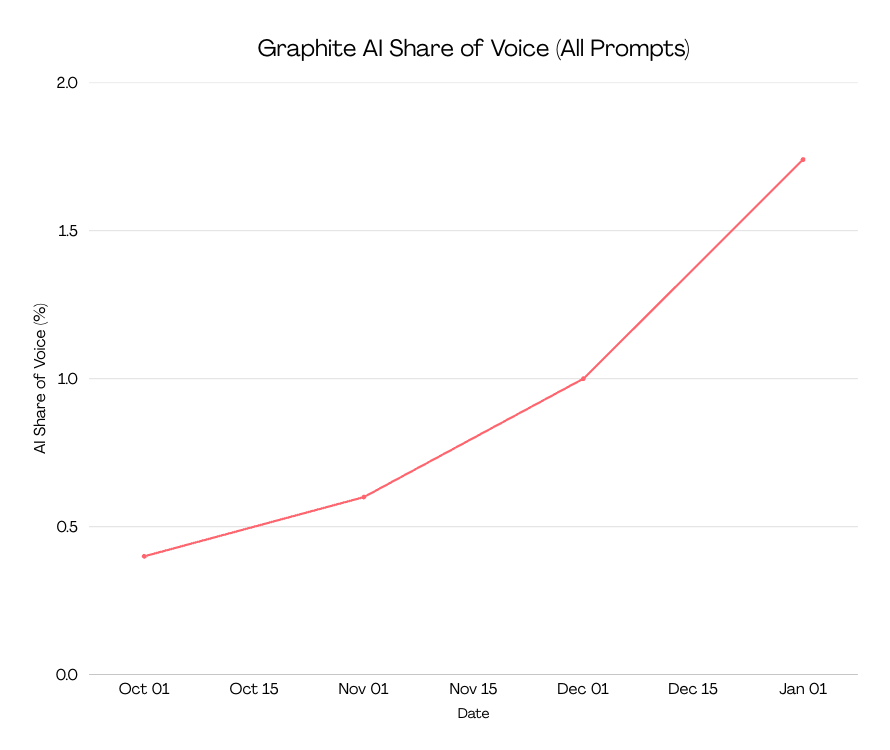

We saw this directly in Noble's work with Graphite. Six strategic mentions in third-party articles that LLMs cite took Graphite's visibility in "best SEO agencies" AI answers from 0% to 33%. Overall AI share of voice increased roughly 4.5x.

In a personalized world, those six sources surface for different user profiles at different times, which means the total reach is broader than a single-answer model would suggest.

Nothing here requires scrapping your SEO program. It's simply an expansion of what you're already doing.

Step 1 is the audit, and it's the step most teams skip. Don't just run your buyers' prompts once and screenshot the results. Run them with different framing (e.g., as a startup founder, as an enterprise buyer, or with different industry context). See how the answers change.

Which brands appear and disappear? Which sources get cited in each variation? The gap between a cold prompt and a personalized user's results is your real exposure risk.

Tools: ChatGPT (with and without memory enabled), Perplexity, Google AI Mode. Manual, but eye-opening.
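Once you've collected the answers, a small script can show which brands appear and disappear across framings. This is a minimal sketch. It assumes you copy each answer's recommended brands and cited domains into a Python structure by hand. The framing labels, brand names, and domains are hypothetical placeholders, not real audit results.

```python
from collections import defaultdict

# Hypothetical audit data: one AI answer per prompt framing, with the brands
# it recommended and the source domains it cited, recorded by hand.
# Every name here is a placeholder, not a real result.
AUDIT = {
    "cold prompt": {
        "brands": {"BrandA", "BrandB", "BrandC"},
        "sources": {"review-site.example", "forum.example"},
    },
    "startup founder": {
        "brands": {"BrandA", "BrandB", "BrandD"},
        "sources": {"forum.example", "startup-blog.example"},
    },
    "enterprise buyer": {
        "brands": {"BrandB", "BrandC", "BrandE"},
        "sources": {"analyst.example", "review-site.example"},
    },
}


def presence(audit, key):
    """Map each brand or source (per `key`) to the framings whose answer included it."""
    seen = defaultdict(set)
    for framing, result in audit.items():
        for item in result[key]:
            seen[item].add(framing)
    return dict(seen)


def summarize(audit, your_brand):
    framings = set(audit)
    brands = presence(audit, "brands")
    sources = presence(audit, "sources")
    return {
        # Brands that show up no matter how the question is framed.
        "always": sorted(b for b, seen in brands.items() if seen == framings),
        # Brands that appear and disappear depending on framing.
        "sometimes": sorted(b for b, seen in brands.items() if seen != framings),
        # Framings where your brand never appeared: your exposure risk.
        "you_missing_in": sorted(framings - brands.get(your_brand, set())),
        # Sources cited under only one framing are easy to miss in a cold audit.
        "single_framing_sources": sorted(s for s, seen in sources.items() if len(seen) == 1),
    }


if __name__ == "__main__":
    for key, value in summarize(AUDIT, your_brand="BrandA").items():
        print(f"{key}: {value}")
```

With this sample data, BrandA is missing from the "enterprise buyer" answer. A cold prompt alone would never reveal that gap.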

And don't treat it as a one-time exercise. Research from Profound found that 40–60% of AI citations change month to month. Your audit needs to be recurring.
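To track that churn, you can diff each month's cited URLs. This is a sketch, assuming you save the URLs cited for the same tracked prompts every month. It defines churn as the share of last month's citations that weren't cited again. That definition is an assumption for illustration and not necessarily how Profound measured change. The URLs are hypothetical.

```python
def compare_months(previous, current):
    """Return (churn, dropped, added) between two months of cited URLs.

    Churn here is the share of last month's citations that dropped out,
    an assumed definition chosen for illustration.
    """
    previous, current = set(previous), set(current)
    dropped = sorted(previous - current)
    added = sorted(current - previous)
    churn = len(dropped) / len(previous) if previous else 0.0
    return churn, dropped, added


# Hypothetical citations recorded for the same prompt set in two months.
march = {"a.example/best-tools", "b.example/review", "c.example/listicle", "d.example/guide"}
april = {"a.example/best-tools", "c.example/listicle", "e.example/roundup", "f.example/thread"}

churn, dropped, added = compare_months(march, april)
print(f"churn: {churn:.0%}")
print("dropped:", dropped)
print("new:", added)
```

Dropped URLs are sources worth re-checking. New ones are fresh candidates for your citation map.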

Step 2 is citation mapping. Go beyond the top 5 sources. In a personalized world, sources in positions 6–20 matter almost as much, because any of them could surface for a specific user's context.

Identify the long tail: category listicles, best-of roundups, comparison guides, analyst write-ups, YouTube reviews, Reddit threads, Quora answers, and trade editorial. Cross-reference across platforms: a source invisible on ChatGPT might be highly cited on Perplexity.
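You can do the cross-referencing with a simple set comparison. This sketch assumes you've already recorded, for each platform, which sources were cited for your category prompts and which brands each source mentions. It lists the sources that mention a competitor but not you, ranked by how many platforms cite them. All platform data, source domains, and brand names are hypothetical placeholders.

```python
from collections import defaultdict

# Hypothetical mapping data: for each AI platform, the sources it cited for
# your category prompts and the brands each source mentions. Placeholders only.
CITATIONS = {
    "ChatGPT": {
        "listicle.example": {"CompetitorX", "CompetitorY"},
        "review.example": {"YourBrand", "CompetitorX"},
    },
    "Perplexity": {
        "forum.example": {"CompetitorY"},
        "listicle.example": {"CompetitorX", "CompetitorY"},
    },
    "Google AI Mode": {
        "video.example": {"CompetitorX"},
        "review.example": {"YourBrand", "CompetitorX"},
    },
}


def citation_gaps(citations, you, competitors):
    """Sources that mention a competitor but not you, with the platforms citing them."""
    platforms_by_source = defaultdict(set)
    for platform, sources in citations.items():
        for source, brands in sources.items():
            if you not in brands and brands & competitors:
                platforms_by_source[source].add(platform)
    # Sources cited on more platforms come first; ties break alphabetically.
    return sorted(
        ((source, sorted(platforms)) for source, platforms in platforms_by_source.items()),
        key=lambda item: (-len(item[1]), item[0]),
    )


for source, platforms in citation_gaps(CITATIONS, "YourBrand", {"CompetitorX", "CompetitorY"}):
    print(source, "->", ", ".join(platforms))
```

Sorting by platform count is one reasonable prioritization. Weighting by prompt volume or buyer segment would be a natural refinement if you track those.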

Tools like the ones we list in another blog post here can accelerate this process by showing you exactly where competitors are being cited and you're not.

Step 3 is placement. This is where the real work lives… and where most teams get stuck.

The goal isn't a backlink. It's a relevant, contextual mention in a source the model trusts. Being cited alongside the right competitors and topics is how models learn when to surface your brand.

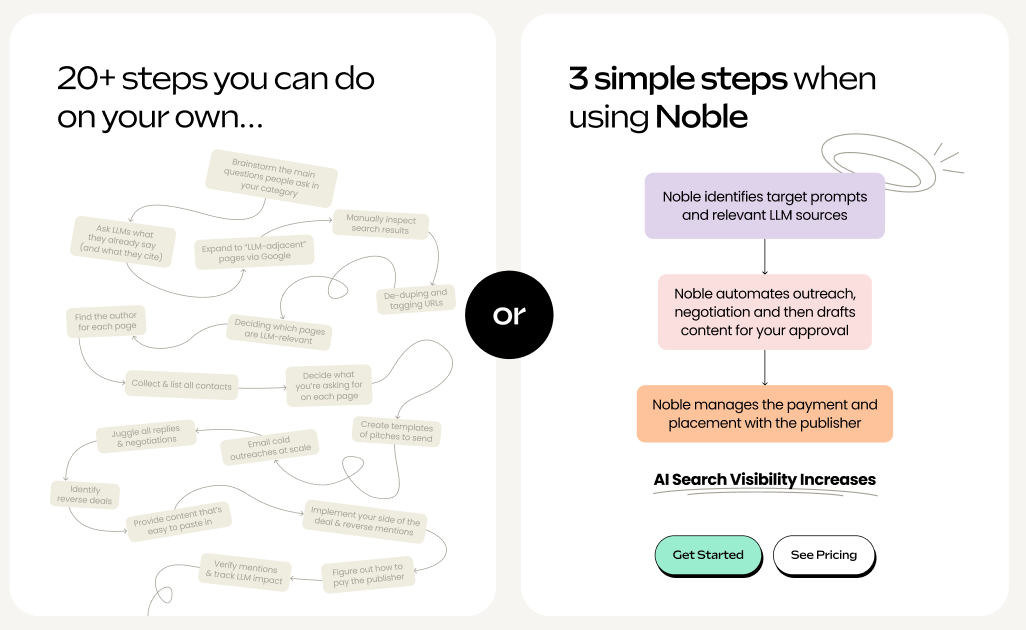

Each mention requires identifying the gap, reaching the publisher, negotiating terms, drafting content for approval, processing payment, and tracking placement.

One mention can take up to 10 hours when done manually across 20+ individual steps. Multiply that across dozens of citation gaps and you're looking at a serious operational lift.

We have a blog post on how to run this process yourself, plus a free templated spreadsheet to track progress.

Step 4 is on-site work. Schema markup. Answer-first content structure. Clear entity signals. Fast, crawlable site. LLMs still pull from primary sources, especially for long-tail queries where you have something unique to say.

On-site SEO is the floor.

It just isn't the end-all-be-all anymore.

The audit (Step 1) and on-site work (Step 4) are probably manageable in-house.

The citation mapping and placement (Steps 2 and 3)? That's where teams hit a wall.

Noble automates the outreach, negotiation, and payment to get your brand into the sources that power AI answers across Google, ChatGPT, and Perplexity.

We find the gaps and handle placement so you show up in the answers your prospects are actually reading.

Want to know more? Talk to us.

About Noble: Noble is an AI search platform that automates the outreach, negotiation, and payment required to get your brand mentioned in the sources LLMs cite. The result: you show up in AI-generated answers.
