
Three structural reasons legacy distribution falls short, plus the distinction between being cited and being named in the answer.

TL;DR: PR newswires were built to distribute press releases across media networks. LLMs were built to synthesize answers from sources they already trust. These are different systems with almost no overlap. Newswires target the wrong sources, syndication volume doesn't register as multiple mentions, and new articles carry less weight than existing cited content. The real goal isn't just getting cited as a source; it's getting your brand mentioned within the sources LLMs cite, so you show up in the answer itself.


A growing number of companies that were built for traditional PR distribution are now pitching themselves as AI search visibility solutions. The packaging is new, but the underlying model is the same: distribute content across a wide network and hope that volume translates to visibility.

It doesn't. And there are three structural reasons why.

This post breaks down each of them, then covers a fourth point: the distinction between getting cited as a source and getting your brand named in the answer, which changes how you should think about the goal itself.

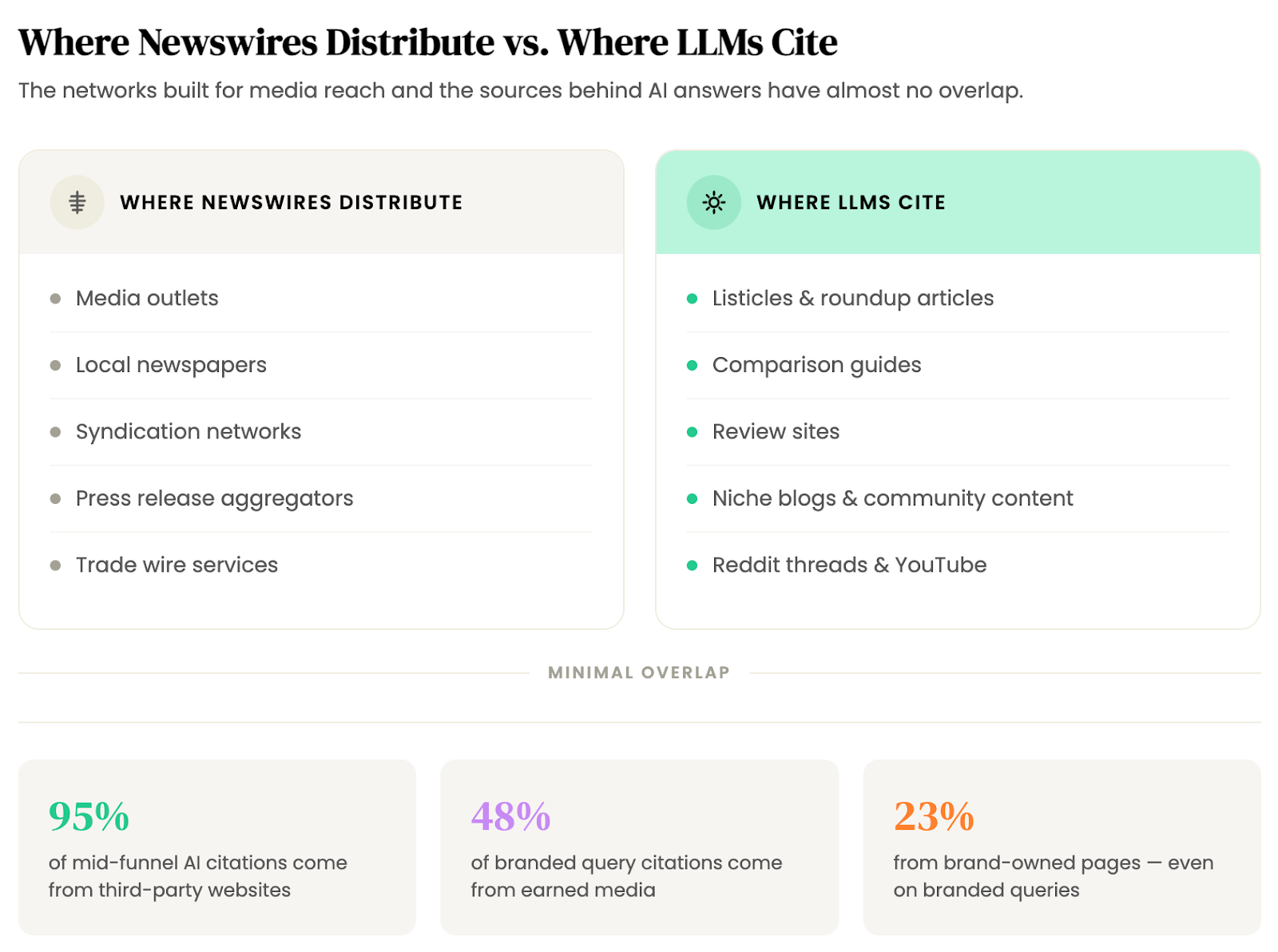

PR newswires distribute your content to media outlets, local newspapers, and syndication partners. That network was built for traditional media reach, and it still works for that purpose.

But LLMs don't pull mid-funnel answers from those sources. When a buyer asks ChatGPT or Perplexity "what's the best project management tool for remote teams?" or "top treasury management platforms," the sources behind those answers are listicles, comparison guides, review sites, niche blogs, Reddit threads, and YouTube videos.

Not press releases or local news pickups.

The data backs this up. OtterlyAI analyzed more than 1 million AI-generated citations across ChatGPT, Perplexity, and Google AI Overviews and found that 95% of sources for mid-funnel searches are third-party websites rather than brand-owned pages.

But "third-party" doesn't mean "any third-party site." It means the specific types of content LLMs prefer: editorial roundups, community discussions, comparison pages. Newswire distribution networks don't place you in these.

An Omniscient Digital study of 23,000+ AI citations reinforces the point. Even for branded queries (where you'd expect a brand's own content to dominate), 48% of citations come from earned media like editorial features, reviews, and community content, while just 23% come from brand-owned pages. News and press releases showed among the lowest citation rates of all content types studied.

The mismatch is structural…

Newswires place your content where journalists look.

LLMs pull from where buyers research.

Those are different places.

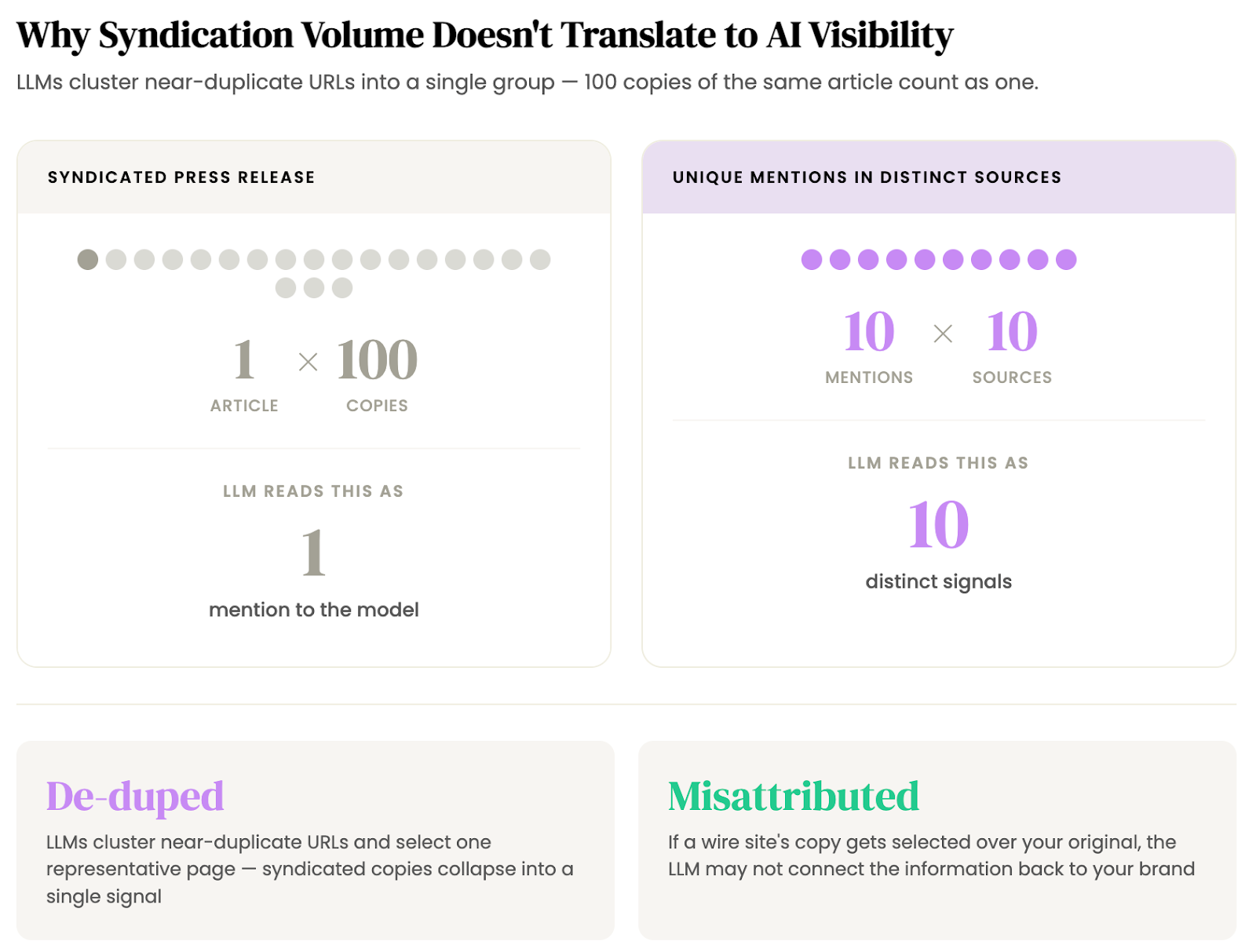

The core value proposition of newswire distribution is reach: your press release appears on hundreds of sites simultaneously. For traditional PR, that matters, but for AI search visibility, it's a non-factor.

One article syndicated 100 times is one mention. Not 100.

Microsoft confirmed this in December 2025 guidance on the Bing Webmaster Blog. LLMs cluster near-duplicate URLs into a single group and select one representative page. If the differences between pages are minimal, the model may select a version that's outdated or not the one you intended to highlight. And when it comes to syndicated content specifically, Microsoft noted that identical copies across domains make it harder for AI systems to identify the original source.

This means syndication doesn't just fail to multiply your visibility; it can actively work against you. If a newswire site's copy gets selected as the representative page over your original, the LLM may attribute the information without connecting it back to your brand at all.

The point: ten identical copies of a press release across ten wire sites collapse into one.

Ten unique mentions across ten different comparison guides are ten separate signals.
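Microsoft has not published the exact method Bing uses, but the effect is easy to picture with a common near-duplicate technique. The minimal Python sketch below is an illustration under stated assumptions, not Bing's algorithm: it compares pages by word 3-gram shingles and Jaccard similarity, and the 0.8 threshold, function names, and example URLs are all hypothetical.

```python
import re


def shingles(text: str, n: int = 3) -> set[tuple[str, ...]]:
    """Return the set of overlapping n-word sequences in the text."""
    words = re.findall(r"\w+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def jaccard(a: set, b: set) -> float:
    """Overlap between two shingle sets: |a & b| / |a | b|."""
    if not a and not b:
        return 1.0  # two empty texts count as identical
    return len(a & b) / len(a | b)


def cluster_near_duplicates(pages: dict[str, str], threshold: float = 0.8) -> list[list[str]]:
    """Greedily group URLs whose text closely matches a cluster's first page.

    Hypothetical stand-in for near-duplicate clustering; the threshold is an assumption.
    """
    clusters: list[tuple[set, list[str]]] = []
    for url, text in pages.items():
        page_shingles = shingles(text)
        for first_page_shingles, members in clusters:
            if jaccard(page_shingles, first_page_shingles) >= threshold:
                members.append(url)
                break
        else:  # no cluster matched, so this page starts a new one
            clusters.append((page_shingles, [url]))
    return [members for _, members in clusters]


# Hypothetical example: one release syndicated to ten wire sites, plus one comparison guide.
release = "Acme launches an AI forecasting module for treasury teams today."
pages = {f"https://wire{i}.example/acme-launch": release for i in range(10)}
pages["https://guide.example/best-treasury-tools"] = (
    "The best treasury platforms compared: Acme, Beta, and Gamma for mid-market finance teams."
)

clusters = cluster_near_duplicates(pages)
print(len(clusters))  # -> 2: all ten wire copies collapse into one group
```

A distinct mention on a different page stays in its own cluster, which is why unique placements add signals while syndicated copies do not.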

Even if a newswire could place your content in the right kinds of sources (it can't, for the reasons above), there's a third structural problem: newswires create new content by definition.

A new press release, a new article, a new URL. But LLMs already have sources they rely on for specific query types. Those sources have accumulated trust signals over time: citation history, cross-referencing patterns, structural familiarity. A brand-new article starts from zero on all of these.

AirOps studied more than 45,000 citations across 800 queries and found that brands earning both a mention and a citation are 40% more likely to resurface across consecutive AI responses than brands with citations alone. Only about 30% of brands maintain back-to-back visibility in AI answers.

Visibility is probabilistic, not permanent. Every time the model regenerates an answer, it re-samples from its source pool.

That means your best strategy is to be in more of the sources already in that pool. Not to publish new content and hope the model eventually picks it up.
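A toy model makes the "be in more of the sources already in that pool" logic concrete. The Python sketch below assumes, purely for illustration, that each regenerated answer draws a fixed number of distinct sources uniformly at random from a pool of trusted sources. Real retrieval is weighted and far messier, and the pool size of 50 and five sources per answer are hypothetical.

```python
from math import comb


def p_brand_in_answer(pool_size: int, sources_with_brand: int, sources_per_answer: int) -> float:
    """Chance that at least one sampled source mentions the brand.

    Toy model (an assumption): each regenerated answer draws `sources_per_answer`
    distinct sources uniformly at random from `pool_size` trusted sources.
    """
    # Probability that every sampled source comes from pages that do NOT mention the brand.
    # comb() returns 0 when there are too few such pages, making appearance certain.
    p_none = comb(pool_size - sources_with_brand, sources_per_answer) / comb(pool_size, sources_per_answer)
    return 1 - p_none


# Hypothetical pool of 50 sources, 5 drawn per answer.
for placements in (1, 5, 10, 20):
    print(placements, round(p_brand_in_answer(50, placements, 5), 2))
```

Under these assumptions, the chance of appearing in any given answer rises from about 0.10 with one placement to about 0.42 with five, 0.69 with ten, and 0.93 with twenty. That is the intuition behind widening your footprint inside the existing pool rather than adding one new URL outside it.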

Noble's client work shows this in practice. In one pilot, Noble placed a leading sales tech company in 43 articles that LLMs were already citing for "AI sales tools" queries. The result: share of voice increased 313%, from 2.3% to 9.5%, and the client overtook three direct competitors.
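The pilot's figures are internally consistent: moving from 2.3% to 9.5% share of voice is a gain of 7.2 percentage points, or a relative lift of roughly 313%. The short Python check below reproduces that arithmetic. It takes the two reported share-of-voice values as given and makes no assumption about how they were measured.

```python
def relative_lift(before: float, after: float) -> float:
    """Relative change from `before` to `after`; 1.0 means the value doubled."""
    return (after - before) / before


before_sov, after_sov = 0.023, 0.095  # share of voice before and after the pilot, as reported

point_gain = (after_sov - before_sov) * 100
print(f"absolute gain: {point_gain:.1f} percentage points")  # -> absolute gain: 7.2 percentage points
print(f"relative lift: {relative_lift(before_sov, after_sov):.0%}")  # -> relative lift: 313%
```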

In a separate engagement, Noble placed a fintech client in 9 targeted articles already appearing in LLM citations for treasury-related queries. Visibility lifted 33% over three months, with the highest-performing placements coming from high-authority pages that already had multiple LLM citations.

Neither campaign published new articles. They placed the brand into existing content the models already trusted.

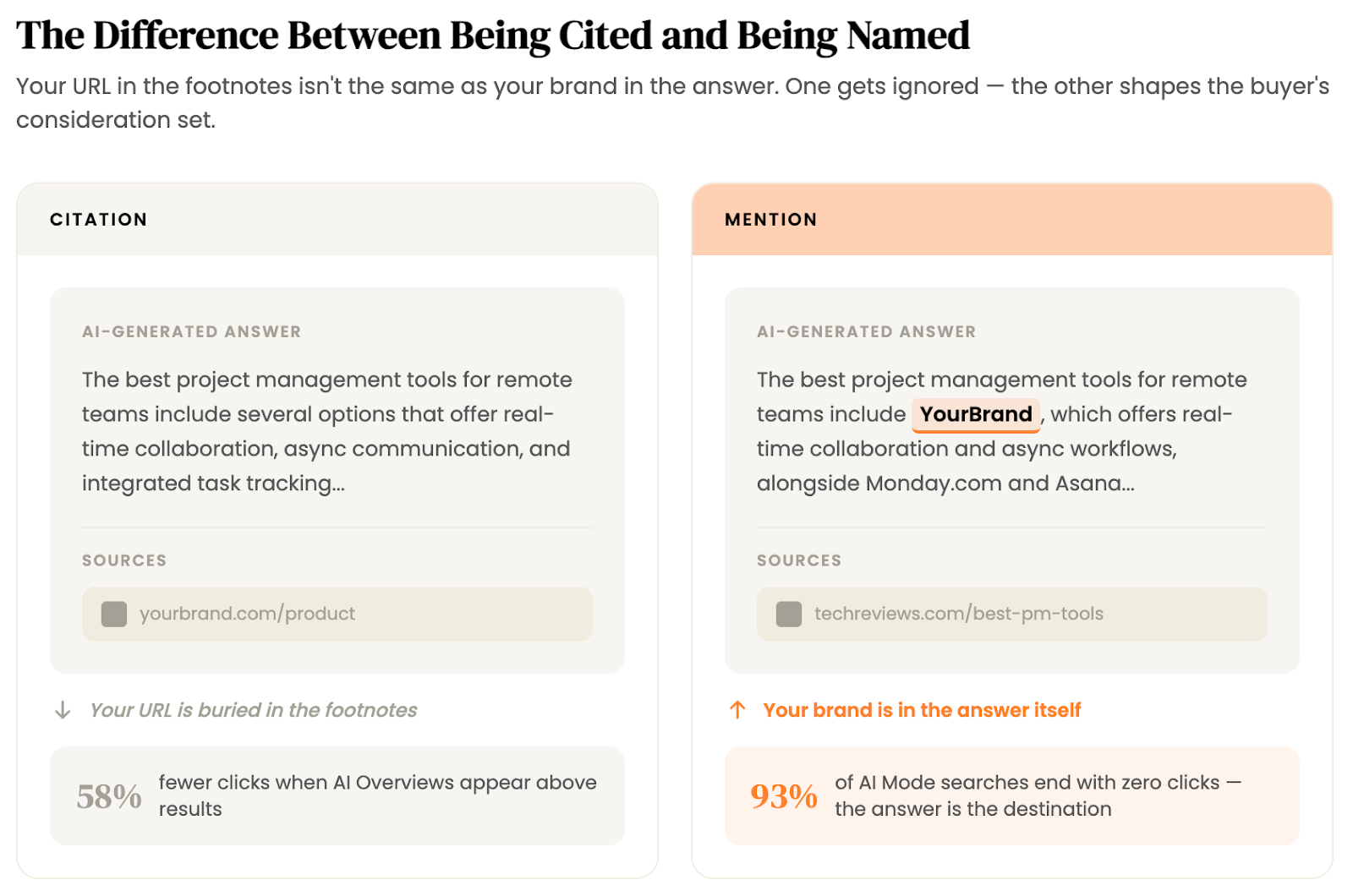

The three problems above explain why the newswire model falls short. But there's a more fundamental issue with how most companies frame "AI search visibility" in the first place.

Most of the conversation conflates two different outcomes.

The first is getting cited: your URL appears as a source link in the footnotes of an AI answer.

The second is getting mentioned: your brand is named in the body of the answer itself. These are not the same thing, and understanding the difference is critical to choosing the right approach.

Getting cited as a source isn't worthless. It means the LLM trusts your content enough to reference it. But a citation alone doesn't guarantee your brand shows up in the answer the user actually reads. The model might cite your article as a source while recommending a competitor by name.
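The difference between the two outcomes can be checked mechanically for any single answer. The Python sketch below is a simplified illustration, not any monitoring tool's method. It treats a brand as mentioned if its name appears as a whole word in the answer text, and as cited if any source URL sits on the brand's domain. The brand names, domain, and function names are hypothetical, and real tracking would also need to handle aliases, misspellings, and product names.

```python
import re
from urllib.parse import urlparse


def on_domain(url: str, domain: str) -> bool:
    """True if the URL's host is the domain itself or one of its subdomains."""
    host = urlparse(url).hostname or ""
    return host == domain or host.endswith("." + domain)


def classify_visibility(answer_text: str, cited_urls: list[str], brand: str, brand_domain: str) -> dict[str, bool]:
    """Report whether a brand is named in the answer body and whether its site is cited."""
    mentioned = re.search(rf"\b{re.escape(brand)}\b", answer_text, re.IGNORECASE) is not None
    cited = any(on_domain(url, brand_domain) for url in cited_urls)
    return {"mentioned": mentioned, "cited": cited}


# Hypothetical answer: Acme's guide is a source, but the body recommends competitors.
answer = "For remote teams, reviewers most often recommend Beta or Gamma."
sources = ["https://blog.acme.example/remote-pm-guide", "https://reviews.example/pm-tools"]
print(classify_visibility(answer, sources, brand="Acme", brand_domain="acme.example"))
# -> {'mentioned': False, 'cited': True}
```

The example reproduces the case described above: the model cites the brand's own article while naming competitors in the text the user actually reads.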

This matters more in a zero-click environment.

Ahrefs' December 2025 study found that AI Overviews now reduce click-through rates for top-ranking pages by 58%, up from 34.5% when Ahrefs first measured the effect in April 2025. In Google's AI Mode, Semrush found that roughly 93% of searches end without any click at all. Across all Google searches in the US, about 58.5% now end without a click to any external site.

When fewer people are clicking source links, the value of a citation shifts. It's still a trust signal for the model, but the direct visibility comes from being mentioned in the answer where the user's attention actually is.

The higher-value outcome is getting your brand named within the sources LLMs rely on. When your brand appears in the listicles, roundups, and comparison guides that models already trust and cite, that mention carries into the answer itself. The user sees your name. Now, your brand is on the shortlist.

The same AirOps research shows how rare that combination is: only about 28% of AI responses include brands that are both mentioned and cited. The dual signal that makes brands 40% more likely to resurface is uncommon, but it is disproportionately powerful when it happens.

This is the core distinction. PR newswires can, at best, generate citations: your URL might end up in the model's source pool. But they're structurally weak at getting your brand mentioned by name within the sources that matter.

And it's the mention that puts you in the answer.

If you're currently relying on newswire distribution for AI search visibility, or evaluating a vendor that's pitching this approach, here's a more effective framework:

If you want to run through this process yourself, we have an in-depth step-by-step playbook you can check out here. We’ve also written a comparison of the top AI search monitoring tools if you've started this process but need stronger insights.

Noble automates the hard parts of this process: citation audits to find the gaps, outreach and negotiation with publishers, and placement and payment to get your brand mentioned in the sources LLMs actually cite.

Want to get started? Talk to us.

About Noble: Noble is an AI search platform that automates the outreach, negotiation, and payment required to get your brand mentioned in the sources LLMs cite. The result: you show up in AI-generated answers.
